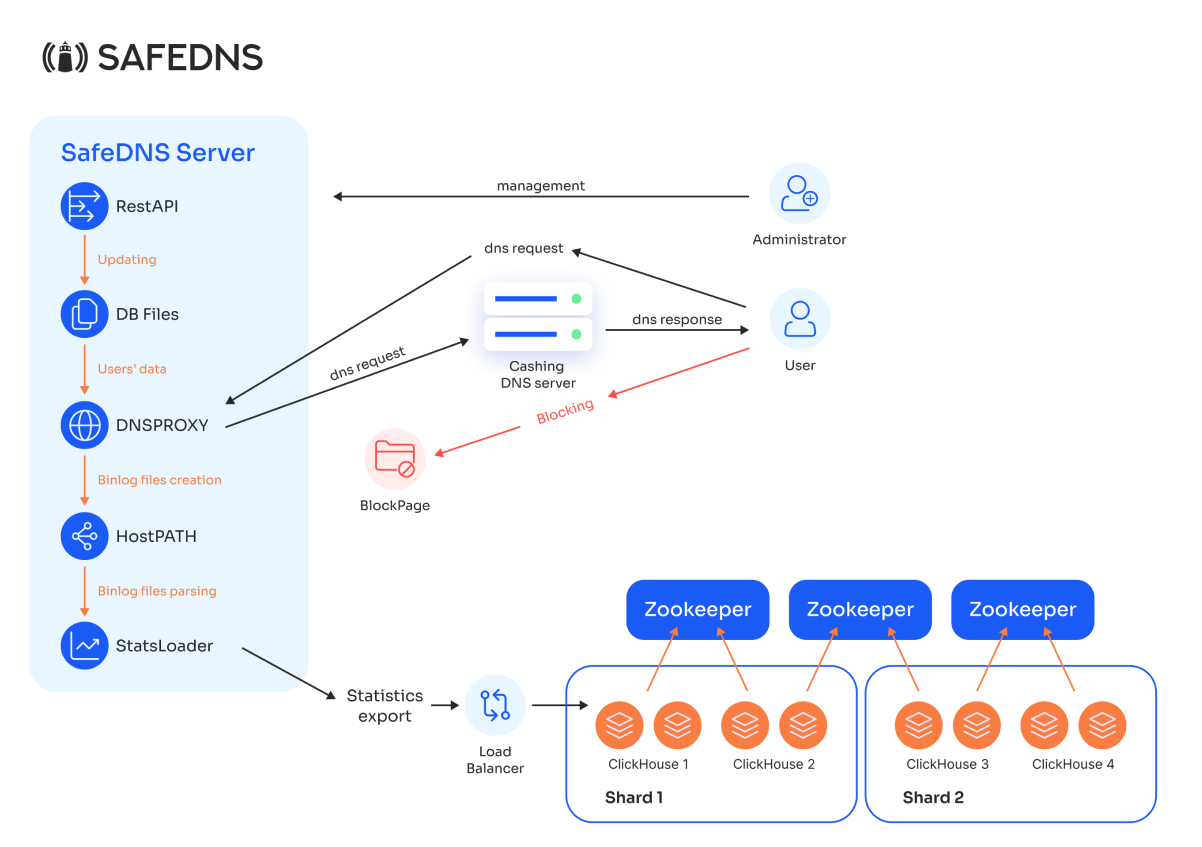

4. Components

Our solution consists of the following components:

- DNS Proxy Module: Receives DNS requests, applies the filtering policies, and returns responses in the form of IP addresses.

- Internal Database: Stores information about all users and filtering policies.

- RestAPI: Used to update the Database. It supports:
  - Creation/updating of users or user groups, each with:
    - Identifier (subnet/IP/port)
    - Filtering profile
    - Block page
  - Creation/modification of filtering profiles (specifying which categories should be blocked)
  - Creation/modification of block pages

- Binary Log Parsing Module for DNS proxy (StatsLoader)

- Statistics Export Module: exports statistics to the ClickHouse DBMS.
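To make the relationship between users, filtering profiles, and block pages concrete, here is a minimal in-memory sketch of the records the RestAPI writes to the Internal Database. The class names (`FilteringProfile`, `BlockPage`, `User`, `InternalDatabase`), their fields, and the rule that a user may only reference existing profiles and block pages are hypothetical assumptions for illustration; the actual SafeDNS Shield schema and API are not described in this section.

```python
from dataclasses import dataclass, field


# Hypothetical record types: the real SafeDNS Shield schema is not documented here.
@dataclass
class FilteringProfile:
    name: str
    blocked_categories: set[str] = field(default_factory=set)


@dataclass
class BlockPage:
    name: str
    address: str  # IP returned to clients for blocked domains (assumption)


@dataclass
class User:
    identifier: str  # subnet, IP, or port, stored as a plain string here
    profile: str     # name of an existing FilteringProfile
    block_page: str  # name of an existing BlockPage


class InternalDatabase:
    """In-memory stand-in for the Internal Database updated through the RestAPI."""

    def __init__(self) -> None:
        self.profiles: dict[str, FilteringProfile] = {}
        self.block_pages: dict[str, BlockPage] = {}
        self.users: dict[str, User] = {}

    def upsert_profile(self, profile: FilteringProfile) -> None:
        # Create or modify a filtering profile (which categories are blocked).
        self.profiles[profile.name] = profile

    def upsert_block_page(self, page: BlockPage) -> None:
        # Create or modify a block page.
        self.block_pages[page.name] = page

    def upsert_user(self, user: User) -> None:
        # Create or update a user; reject references to unknown profiles or pages.
        if user.profile not in self.profiles:
            raise KeyError(f"unknown filtering profile: {user.profile}")
        if user.block_page not in self.block_pages:
            raise KeyError(f"unknown block page: {user.block_page}")
        self.users[user.identifier] = user
```

A typical sequence creates the profile and block page first, then the user that references them, for example `db.upsert_user(User("10.0.0.0/24", "kids", "default"))`.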

Explanation of other elements of the setup:

Client: Represents the end user of the DNS filtering service. The client sends DNS requests to SafeDNS Shield.

DB Files: This is the database that stores information about clients and filtering rules. RestAPI reads and updates the information in this database, and the DNS Proxy uses it to apply filtering.

DNS Proxy: This is the core component of the DNS filtering process. It receives DNS requests from the client, applies filtering rules based on the data from DB Files, and then forwards the requests to the Caching DNS Server.
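The filtering step can be sketched as a per-query decision: find the most specific subnet identifier that contains the client's IP, look up that user's blocked categories, and either answer with the block page address or forward the query to the caching resolver. Everything below is a hypothetical illustration: the `USERS` and `CATEGORIES` tables, the longest-prefix-match rule, and forwarding unknown clients without filtering are assumptions, not the documented behaviour of SafeDNS Shield.

```python
import ipaddress
from typing import Optional

# Hypothetical policy data; in the described setup it would come from DB Files.
# identifier (subnet or single IP) -> (blocked categories, block page IP)
USERS = {
    "10.0.0.0/8": ({"malware"}, "192.0.2.10"),
    "10.1.2.0/24": ({"malware", "gambling"}, "192.0.2.20"),
}

# Hypothetical domain-to-category mapping.
CATEGORIES = {
    "casino.example": "gambling",
    "bad.example": "malware",
}


def find_policy(client_ip: str) -> Optional[tuple[set[str], str]]:
    """Return the policy of the most specific subnet that contains client_ip."""
    addr = ipaddress.ip_address(client_ip)
    best = None
    for identifier, policy in USERS.items():
        net = ipaddress.ip_network(identifier)
        if addr in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, policy)
    return best[1] if best else None


def decide(client_ip: str, qname: str) -> tuple[str, Optional[str]]:
    """Return ("block", block_page_ip) or ("forward", None) for one query."""
    policy = find_policy(client_ip)
    if policy is None:
        return ("forward", None)  # unknown client: forwarded unfiltered (assumption)
    blocked, block_page_ip = policy
    category = CATEGORIES.get(qname.rstrip(".").lower())
    if category in blocked:
        return ("block", block_page_ip)
    return ("forward", None)
```

Longest-prefix matching lets a narrower subnet (here `10.1.2.0/24`) override the policy of a broader one (`10.0.0.0/8`), which is one plausible way to resolve overlapping identifiers.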

Caching DNS Server: This is a cache server deployed on the client’s side. It performs the actual resolution of DNS requests after they have been filtered through the DNS Proxy.
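The core idea behind the caching server is that an answer is reused until its TTL expires, so repeated queries do not trigger a new resolution. The sketch below models only that behaviour; the `TTLCache` class and its injectable `clock` parameter are hypothetical and say nothing about which resolver software is deployed on the client's side.

```python
import time
from typing import Callable, Optional


class TTLCache:
    """Minimal DNS-style answer cache that honours per-record TTLs."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        # clock is injectable so expiry can be tested without sleeping.
        self._clock = clock
        self._entries: dict[tuple[str, str], tuple[list[str], float]] = {}

    def put(self, qname: str, qtype: str, answers: list[str], ttl: int) -> None:
        # Store the answers together with their absolute expiry time.
        self._entries[(qname.lower(), qtype)] = (answers, self._clock() + ttl)

    def get(self, qname: str, qtype: str) -> Optional[list[str]]:
        # Return cached answers, or None if the entry is absent or expired.
        key = (qname.lower(), qtype)
        entry = self._entries.get(key)
        if entry is None:
            return None
        answers, expires_at = entry
        if self._clock() >= expires_at:
            del self._entries[key]  # expired: the caller must resolve again
            return None
        return answers
```

On a cache miss (`get` returns `None`) the server would perform a full resolution and then call `put` with the TTL from the authoritative answer.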

HostPATH: After processing DNS requests, the DNS Proxy generates binlog files (binary log files containing information about DNS queries). HostPATH is the storage location for these binlog files.

StatsLoader: Once the binlog files are stored, StatsLoader reads and parses the binary data from HostPATH, processes the statistics, and sends them to the ClickHouse cluster for storage and analysis.
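The binlog format itself is not documented here, so the following is only a sketch of how a StatsLoader-style parser might work under an assumed record layout: a little-endian header (`uint32` timestamp, `uint32` client IPv4 address, `uint8` action, `uint16` domain length) followed by the domain bytes. The layout, the `QueryRecord` type, the action codes, and the per-(domain, action) aggregation are all hypothetical.

```python
import ipaddress
import struct
from collections import Counter
from typing import Iterable, Iterator, NamedTuple

# Hypothetical record layout (the real binlog format is not documented here):
# uint32 timestamp, uint32 client IPv4, uint8 action, uint16 domain length, domain.
HEADER = struct.Struct("<IIBH")
ACTIONS = {0: "allowed", 1: "blocked"}  # assumed action codes


class QueryRecord(NamedTuple):
    timestamp: int
    client_ip: str
    action: str
    domain: str


def parse_binlog(data: bytes) -> Iterator[QueryRecord]:
    """Yield one QueryRecord per length-prefixed record in data."""
    offset = 0
    while offset < len(data):
        if offset + HEADER.size > len(data):
            raise ValueError(f"truncated header at offset {offset}")
        ts, ip_int, action, dlen = HEADER.unpack_from(data, offset)
        offset += HEADER.size
        if offset + dlen > len(data):
            raise ValueError(f"truncated domain at offset {offset}")
        domain = data[offset:offset + dlen].decode("ascii")
        offset += dlen
        yield QueryRecord(ts, str(ipaddress.IPv4Address(ip_int)),
                          ACTIONS.get(action, "unknown"), domain)


def aggregate(records: Iterable[QueryRecord]) -> Counter:
    """Count queries per (domain, action), one possible shape for exported rows."""
    return Counter((r.domain, r.action) for r in records)
```

Raising on a truncated record, rather than silently skipping it, makes a partially written binlog file visible to the loader, which could then retry once the file is complete.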

Load Balancer (LB): StatsLoader sends processed statistics to the load balancer, which distributes them across ClickHouse nodes.
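The balancing policy of the LB is not specified. As an illustration only, assuming round-robin with a health check, the balancer hands each batch of statistics to the next node that is currently up. The `RoundRobinBalancer` class, the `is_healthy` callback, and the node names are hypothetical.

```python
from typing import Callable, Sequence


class RoundRobinBalancer:
    """Pick the next healthy node in turn (assumed policy, for illustration)."""

    def __init__(self, nodes: Sequence[str], is_healthy: Callable[[str], bool]) -> None:
        if not nodes:
            raise ValueError("at least one node is required")
        self._nodes = list(nodes)
        self._is_healthy = is_healthy  # hypothetical health-check callback
        self._next = 0

    def pick(self) -> str:
        # Try every node at most once, starting after the last one chosen.
        for _ in range(len(self._nodes)):
            node = self._nodes[self._next]
            self._next = (self._next + 1) % len(self._nodes)
            if self._is_healthy(node):
                return node
        raise RuntimeError("no healthy ClickHouse node available")
```

With nodes `["ch1", "ch2", "ch3"]` and `ch2` down, successive calls to `pick()` return `ch1`, `ch3`, `ch1`, `ch3`, so the load is spread over the remaining nodes.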

ClickHouse Cluster: This is a distributed database used for storing and analyzing DNS request statistics. The cluster is divided into shards, and each shard contains multiple ClickHouse nodes that hold mirrored copies (replicas) of that shard's data for fault tolerance. Splitting the data across Shard 1 and Shard 2 improves performance, because reads and writes are distributed across the shards and processed in parallel.
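The shard-plus-replica layout can be illustrated with a toy model: each row is routed to one shard by hashing a sharding key, copied to every replica of that shard, and a read needs only one live replica per shard. This is not how ClickHouse is implemented (in ClickHouse, routing is typically done by a `Distributed` table's sharding key, and replicated tables copy data asynchronously in the background); the `ShardedCluster` class, the choice of `zlib.crc32` as the hash, and the replica names are assumptions for illustration.

```python
import zlib


class ShardedCluster:
    """Toy model: rows hash to one shard and are mirrored on all its replicas."""

    def __init__(self, shards: list[list[str]]) -> None:
        # shards[i] lists the replica names of shard i (hypothetical names).
        self.shards = shards
        self.storage: dict[str, list[dict]] = {r: [] for shard in shards for r in shard}

    def shard_for(self, key: str) -> int:
        # crc32 is stable across processes, unlike Python's randomized str hash.
        return zlib.crc32(key.encode("utf-8")) % len(self.shards)

    def insert(self, row: dict, key_field: str) -> int:
        idx = self.shard_for(str(row[key_field]))
        for replica in self.shards[idx]:
            self.storage[replica].append(row)  # synchronous mirror in this toy
        return idx

    def count(self, alive: set[str]) -> int:
        # Each shard is read from any one live replica; shards are independent,
        # so in a real cluster these reads could run in parallel.
        total = 0
        for shard in self.shards:
            replica = next((r for r in shard if r in alive), None)
            if replica is None:
                raise RuntimeError(f"all replicas of shard {shard} are down")
            total += len(self.storage[replica])
        return total
```

For example, with shards `[["s1r1", "s1r2"], ["s2r1", "s2r2"]]`, losing `s1r1` and `s2r2` still leaves every row readable, while losing both replicas of one shard makes that shard's data unavailable.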

ZooKeeper: The ZooKeeper instances manage the configuration and coordination of the ClickHouse cluster, ensuring data consistency and the reliability of the distributed system.